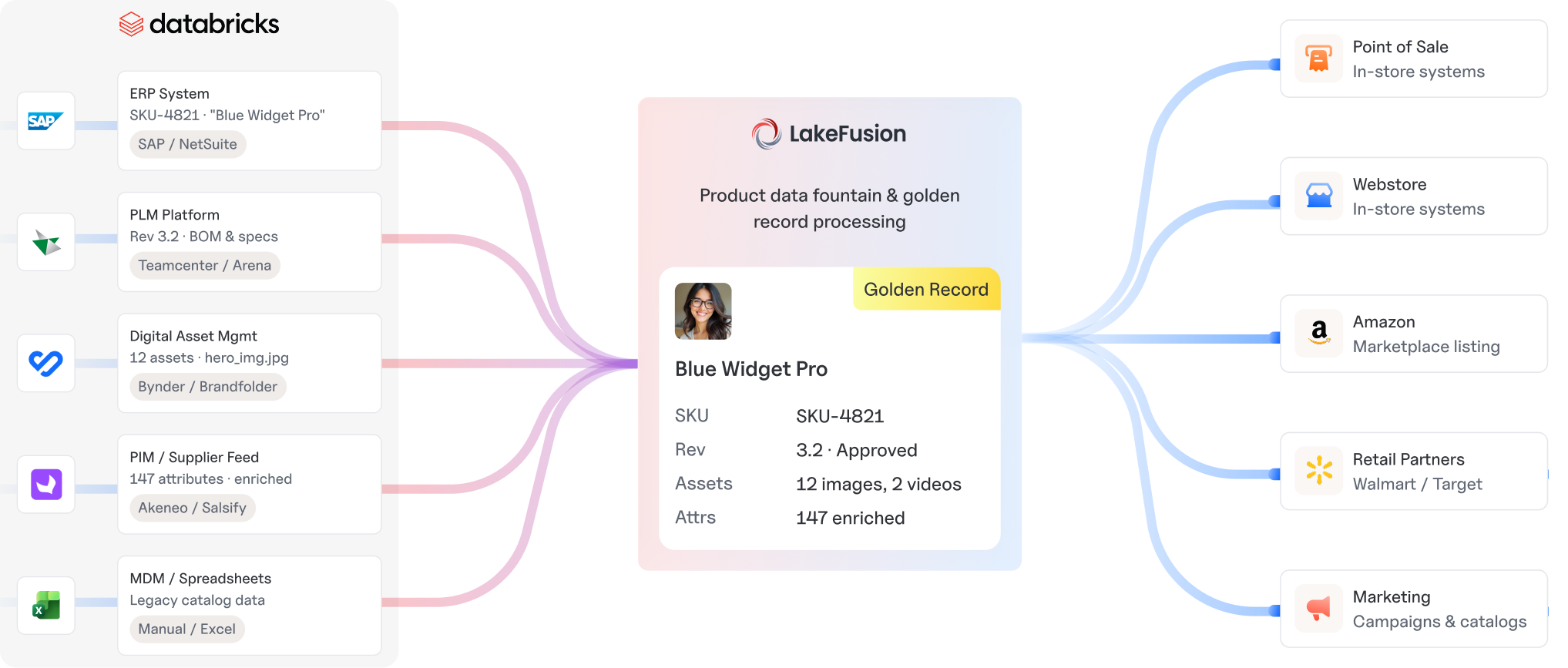

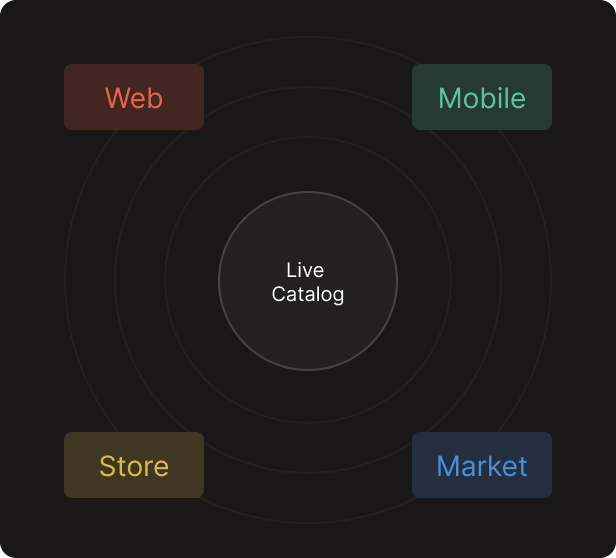

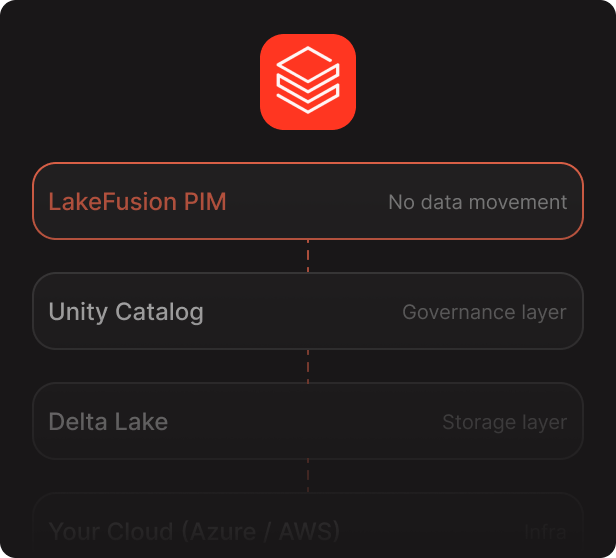

LakeFusion PIM is positioned to work within the data environment your team already trusts, reducing the need for disconnected product data workflows and duplicate infrastructure.

Use AI-assisted match-merge pipelines to onboard, standardize, govern, and enrich product data more efficiently across enterprise workflows.

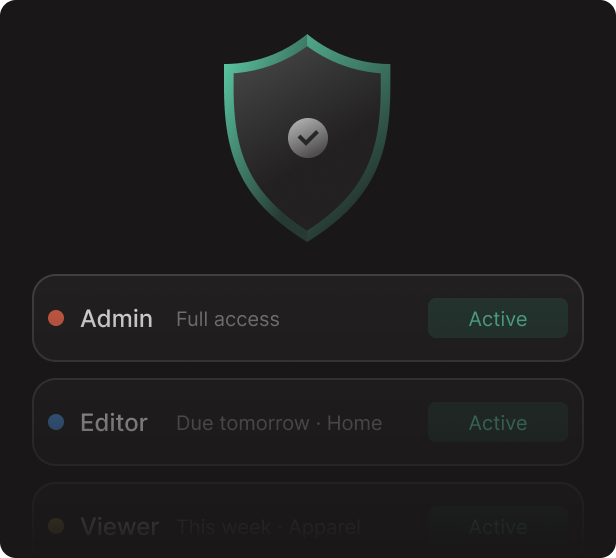

Configurable role-based access, business-friendly interfaces, and practical modeling tools help business teams work with product data more confidently and consistently.

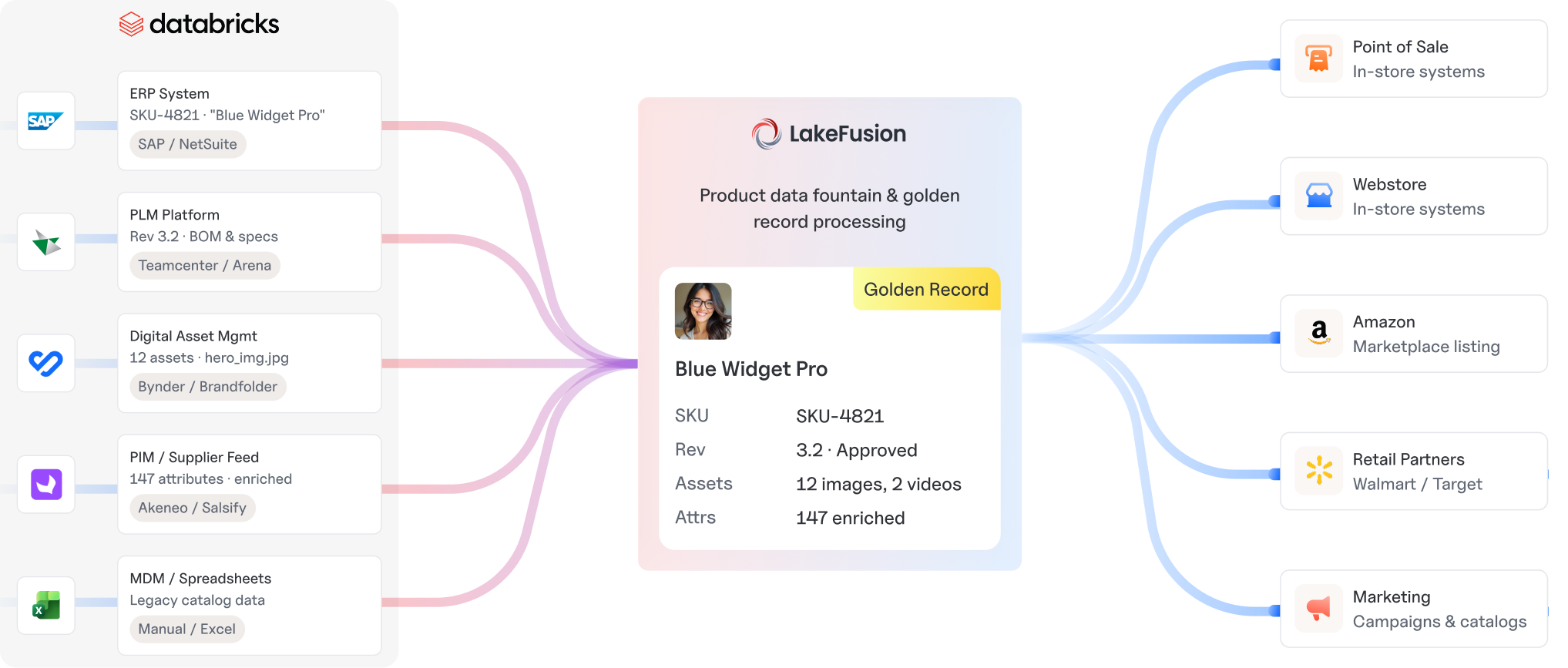

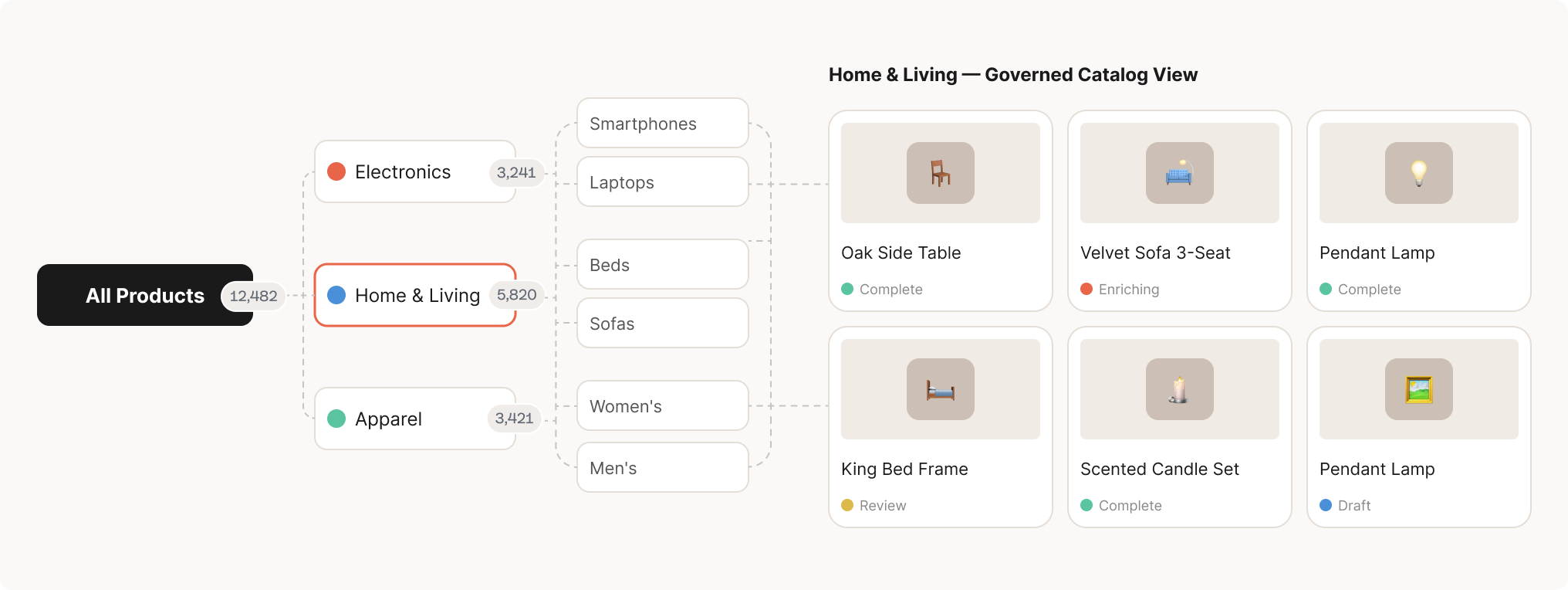

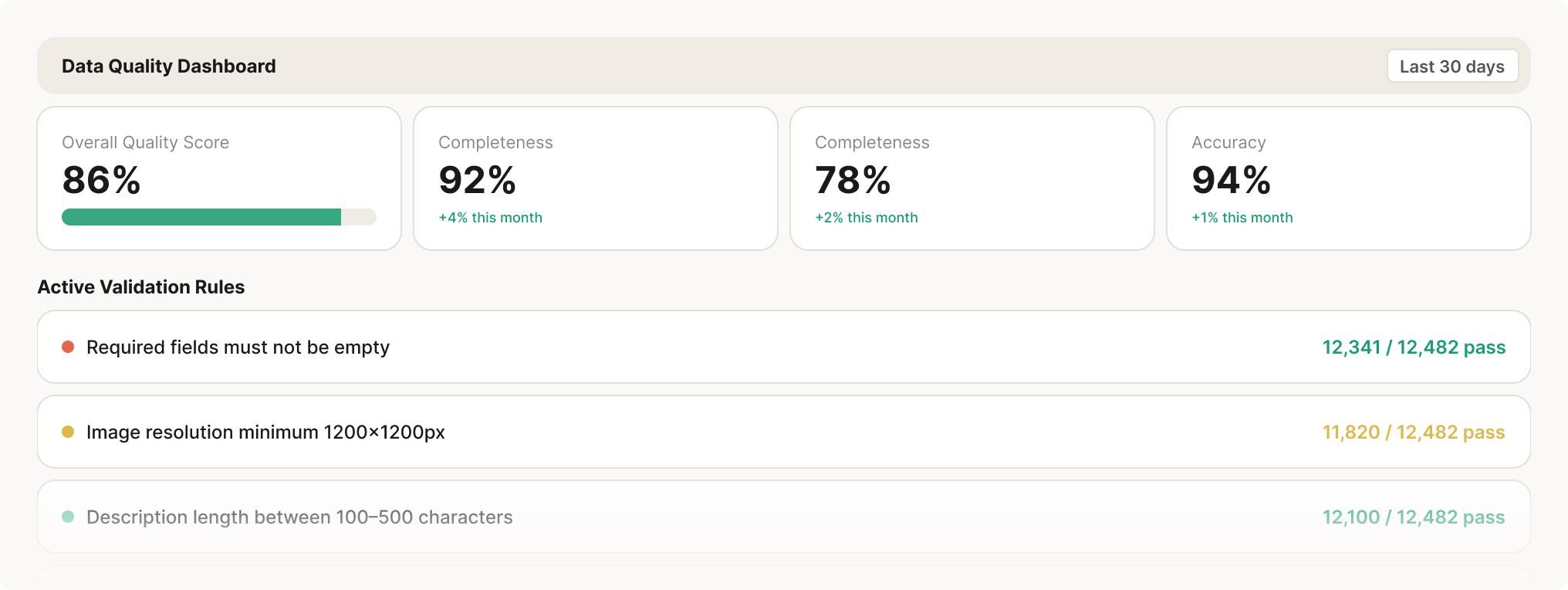

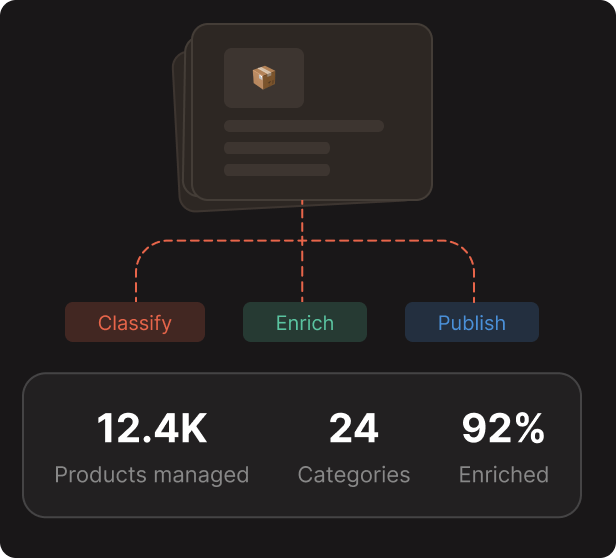

Bringing large, multi-category catalogs into a more governed structure so product data remains easier to manage, review, and operationalize.

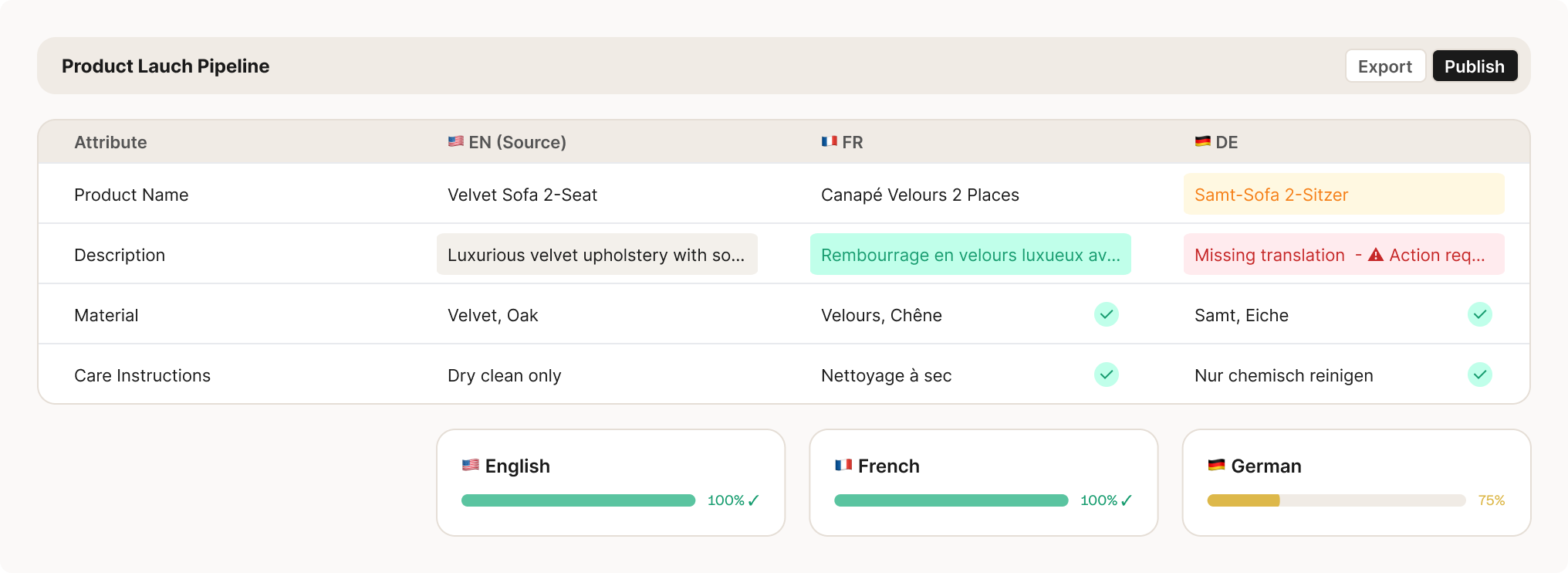

Use LLM-assisted workflows to translate titles, descriptions, attributes, and supporting product content more efficiently for regional teams and localized commerce experiences.

Generate product descriptions, marketing-ready summaries, and channel-specific copy automatically so teams can move faster from product data to publishable content.

Apply AI to matching, standardization, enrichment, classification, and content workflows while keeping product data closer to a governed enterprise foundation.

For a PIM implementation to succeed, everyone involved in product management, from merchandising teams to governance stakeholders, needs to be able to work easily in the same tool.

Give each audience a customized view of the data so they can focus on what matters to them without distractions.

Our interfaces are designed around how your teams work, with every element laid out to support ongoing business operations.

Our architecture is specifically designed to make it straightforward for business users to lay out their data model, manage hierarchies, and review the catalog.

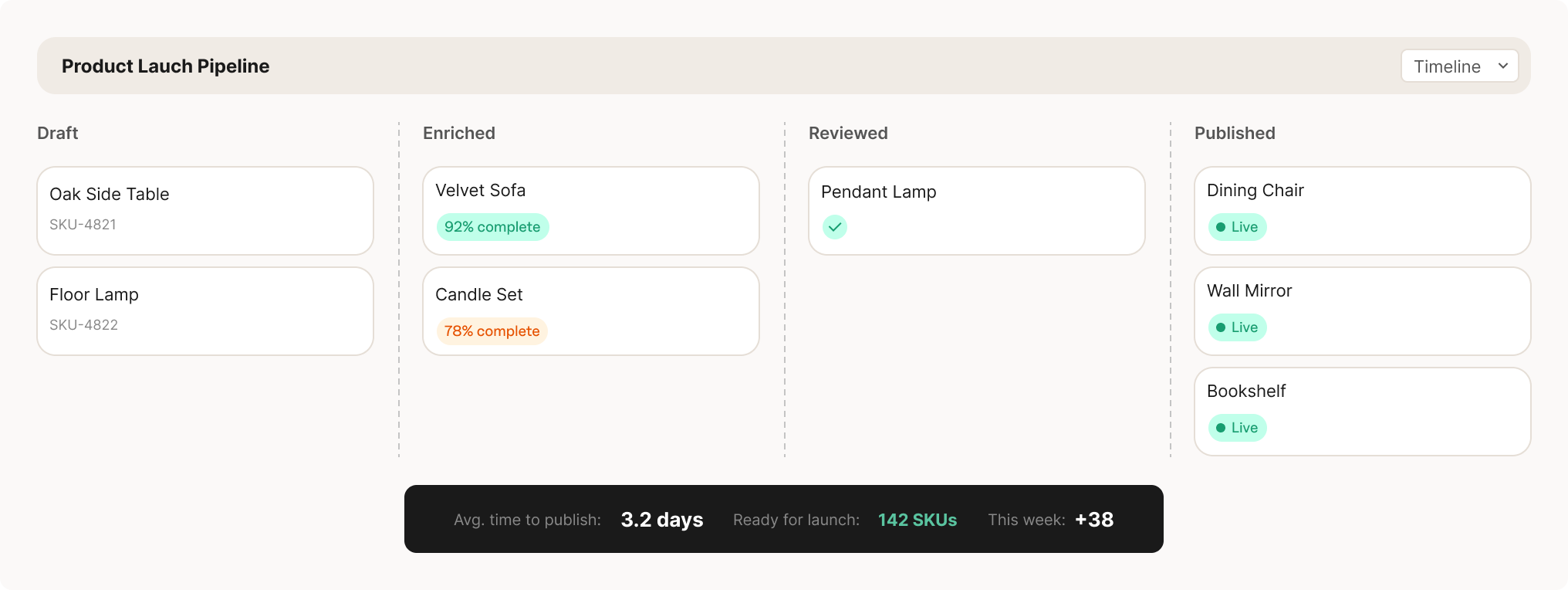

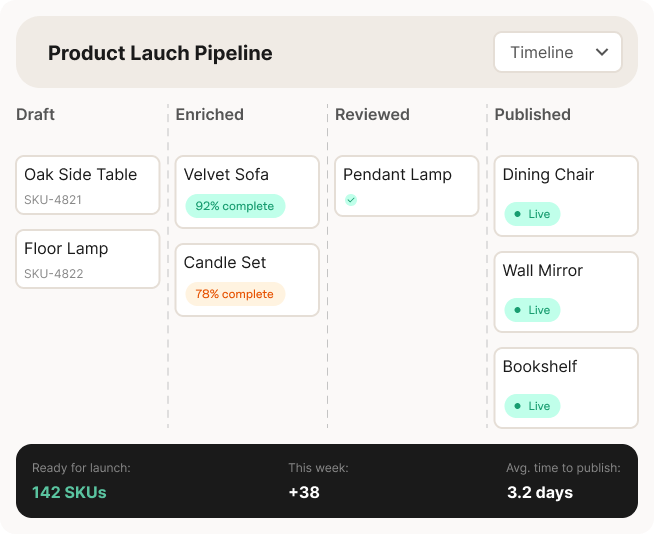

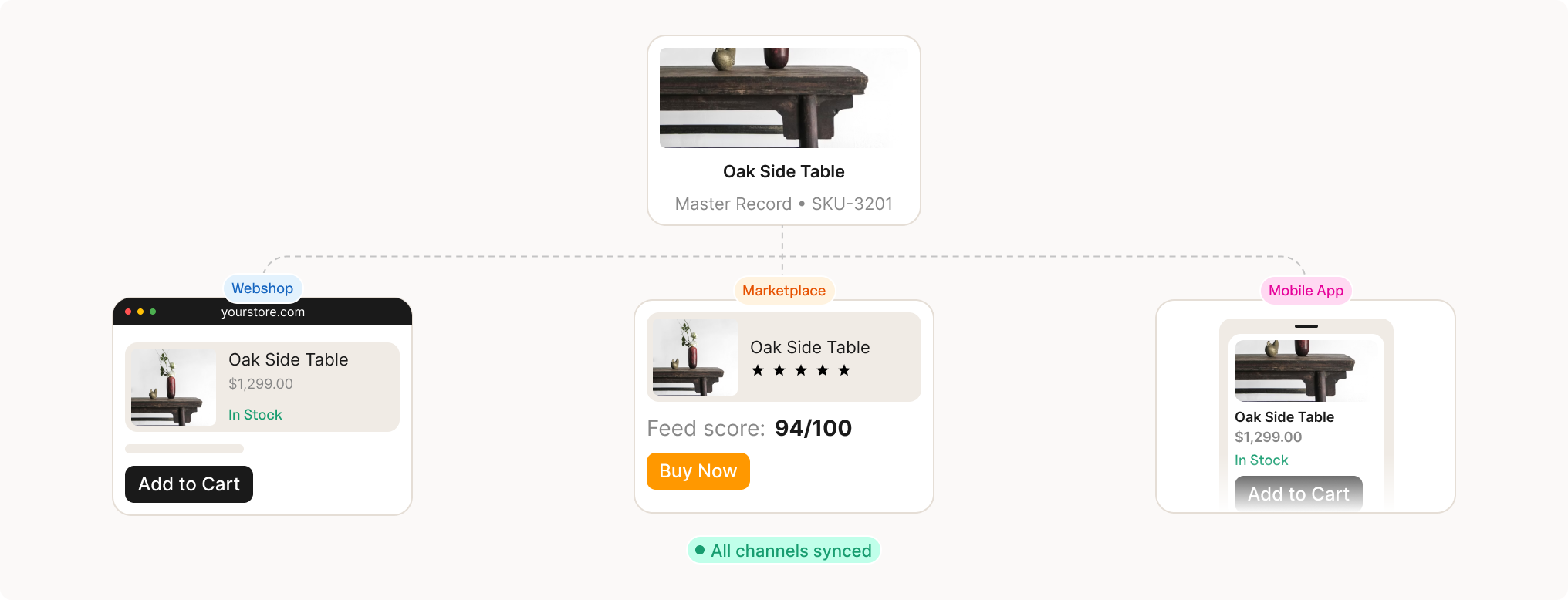

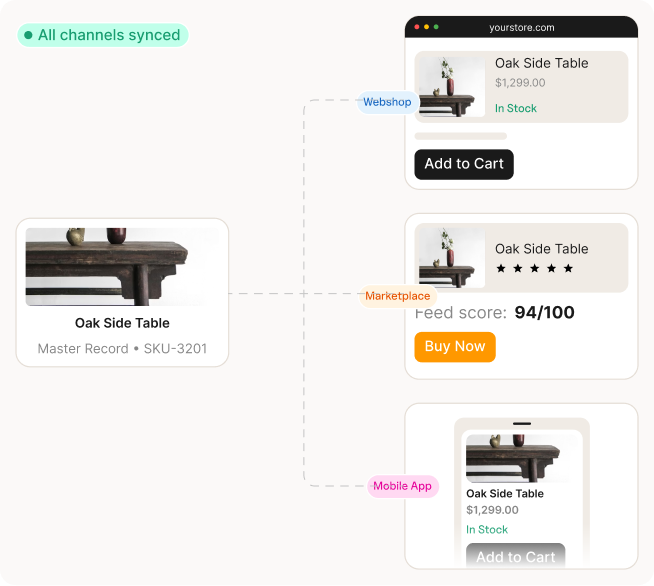

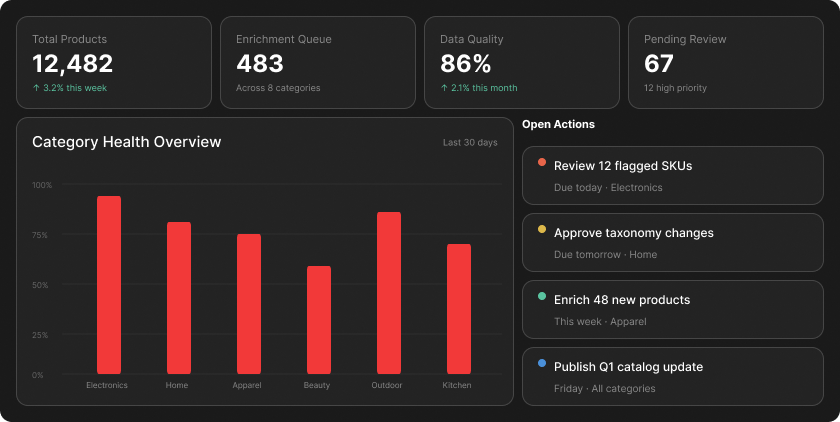

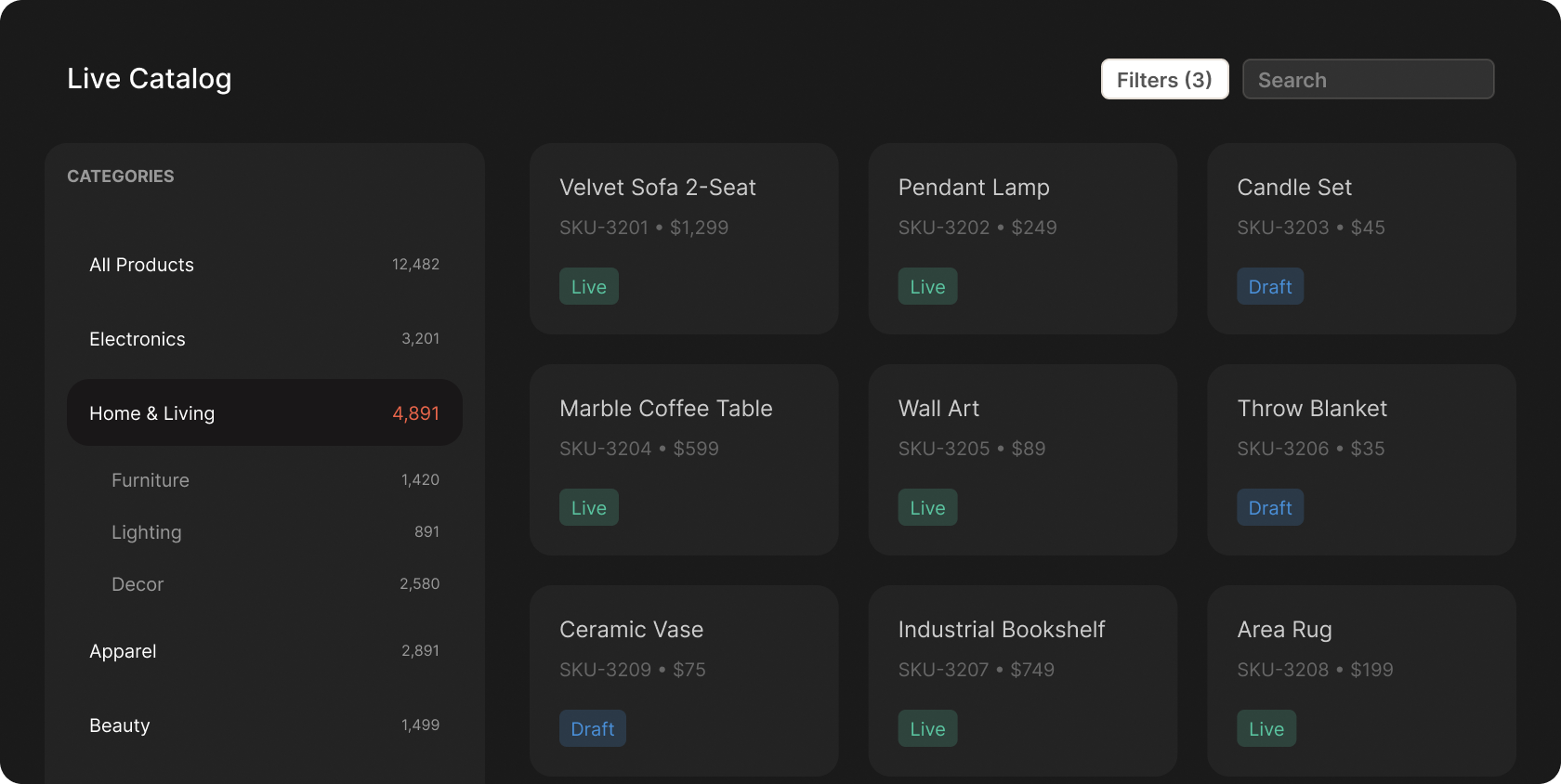

The product video highlights several working surfaces across LakeFusion PIM, each summarized below.

See daily priorities, recent activity, open actions, and category health from a single operational home screen.

Review products as they move through the workflow, assign taxonomy, manage status, and keep the active catalog visible to the teams managing it.

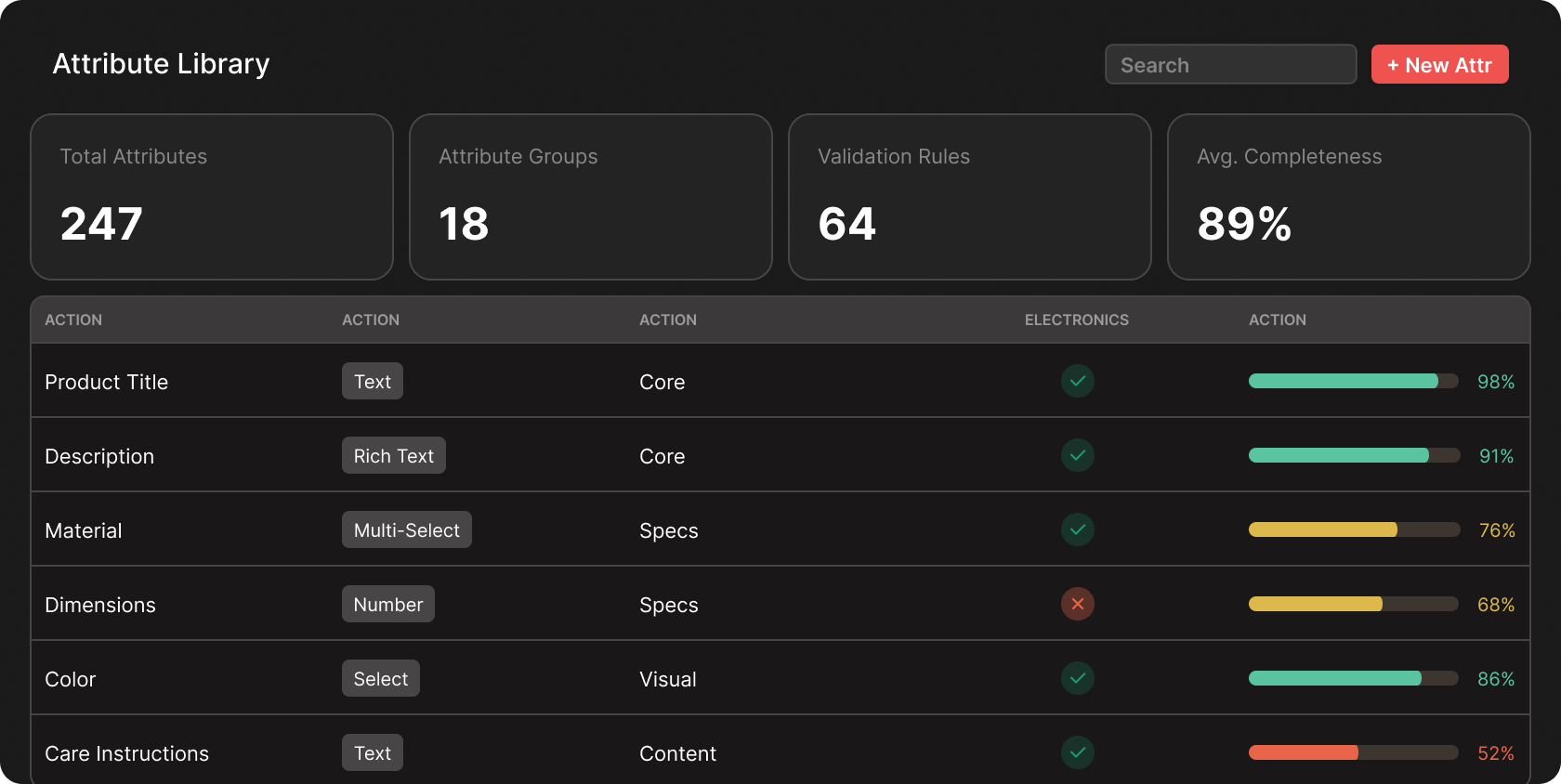

Maintain the underlying attribute model so governance and catalog operations remain connected through shared definitions.

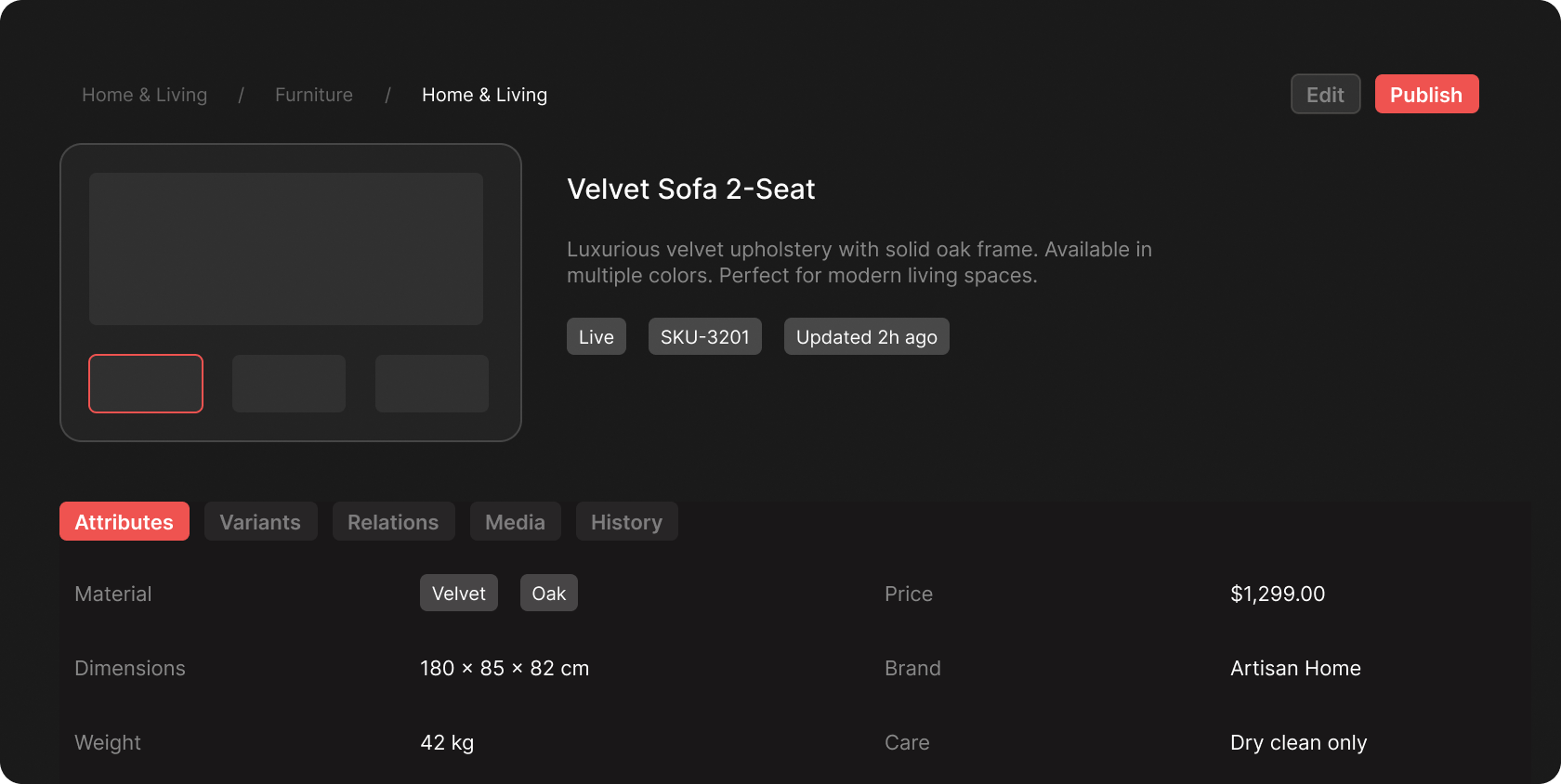

Edit and review product records with structured fields, completion status, and category-specific controls in one place.

These are the enterprise teams most likely to benefit from the workflows shown in the video.

Teams responsible for onboarding, classifying, enriching, and maintaining product records across categories and channels.

Operators who need a live catalog, faster SKU readiness, stronger taxonomy alignment, and more reliable product data.

Owners of controlled attributes, role-based access, auditability, and the business logic that keeps product data trustworthy.

Organizations that want product data management close to their governed data foundation, without introducing migration-heavy side systems.